Multiculturalism is Ethnocentrism

The ugly history of a divisive ideology

Every year I teach a freshman seminar on American society at the little liberal arts university where I am employed. And every year, we spend time discussing the 9/11 attacks. Many of these students were not yet born on September 11, 2001. They know few facts about that day’s events. But they know some other things. When I ask students in the class to describe the single most important lesson learned from 9/11, invariably someone will suggest that it has to do with the extremity of anti-Muslim bias in America. That student will allude to the appalling frequency of hate crimes against American Muslims in the aftermath of the attack. At least some other students will agree, and none of them ever challenges the claim.

Their belief is not consistent with reality. According to the FBI, hate crimes against Muslims did increase post-9/11. But the annual number of such crimes never reached three figures and has typically stayed well under fifty. At its highest level in 2001, the risk for the average Muslim-American was somewhere around 1 in 31,000. For the sake of comparison, the average American faces risks of dying in a fall or being assaulted that are, respectively, three and seventy-five times higher. And the definition of ‘hate crime’ is sufficiently elastic to include such things as verbal insults or the writing of anti-Muslim slogans on walls.

It is not reality that has convinced students to infer from an Islamist attack on American soil that America really hates Muslims, but the splenetic and all-pervasive doctrine of multiculturalism. Just three years after 9/11, Samuel Huntington in Who Are We? (2004) neatly summarized the doctrine as consisting of the following claims: 1) American society contains many different cultural, ethnic, and racial groups; 2) each one of these has its own distinct and unique culture; 3) the white majority in the country has forced minority cultures to accept and adopt the dominant culture against their will; and 4) justice demands that these aggrieved victim cultures be liberated, which requires a full rejection of the melting pot in favor of a national hodgepodge containing multiple and mutually irreducible cultures.

The first point is incontestably true, the second at least plausible. But the third and fourth are not just ideological but hostile to America’s foundational principles.

If, in the current moment, multiculturalism seems an unquestioned dogma in elite American culture, there is still considerable resistance to it at the more popular level. A recent Rasmussen poll showed that more than half of all Americans believe immigrants should prioritize assimilation to the existing American culture over retaining their native traditions. And more than 80% believe some combination of the two is ideal. Only a third believe retention of native culture is most important.

Nonetheless, a third of the population can have an outsized effect on the whole society, especially if they are overrepresented among the upper classes. And they are. Mounds of data show that those who view multiculturalism positively believe white racism is a massive barrier to the advancement of non-whites, and that those who think of traditional American culture in negative terms are more frequently found among the college-educated and the well-to-do. How did we get to this point?

The Invention of Cultural Relativism

The short answer is “blame the ’60s.” The turbulent developments of that decade did culminate in full-blown attempts to install multiculturalist curricula in public schools. But the ’60s are, as the logicians say, necessary but not sufficient to explain the rise of multiculturalism. We must go back to the first decades of the 20th century, to the esoteric debates in American social science that were then beginning to emerge.

The fight was over the infamous phenomenon of ‘social Darwinism.’ There is perhaps no more misused term than this one in the whole history of American intellectual life. It was first popularized as an ideological battering ram by the American historian and former Communist Party member Richard Hofstadter in his deeply skewed 1944 book, Social Darwinism in American Thought. Hofstadter’s work was influential: the term is now frequently wielded to denounce, on often spurious charges of racism, a wide swath of pre-WWI American social scientific thought.

The truth of the matter is somewhat more complex. Darwin’s 1871 book, The Descent of Man, sparked early speculation about how to use the theory of natural selection to understand human society. But in the absence of the empirical data necessary to ground such postulation, and in particular without knowledge of the mechanism by which genetic heritage is transferred, some pushed Darwinism into scientifically uncharted directions. The result, however, was not a monolithically awful body of politically reprehensible and empirically false ideas. It was instead a mixed bag, running the gamut from straightforwardly racist ideologies dolled up with the false veneer of biological science to legitimate insights into human society. At least some of these insights remain compelling and scientifically significant today.

Yale’s William Graham Sumner represents the most trenchant of the thinkers today tarred as ‘social Darwinists.’ Born in 1840, he was the son of a British immigrant widower. Sumner the younger saw in his father a symbol of the honesty, thrift, and realism of the British middle classes. His ‘Forgotten Man’—the hard-working taxpayer forcibly enlisted by the governing elite to pay the bills of the indigent—is still a central concept in libertarian economic circles. Sumner’s analysis of what social classes owe to one another—in brief, nothing at all except mutual adherence to law—is a brilliantly formulated attack on radical leftist group-identity theories.

Less well known these days is the fact that Sumner invented the concept of “ethnocentrism.” This he defined as “the technical name for this view of things in which one’s own group is the center of everything, and all others are scaled and rated with reference to it.” In Sumner’s framework, the natural tendency for every social group is to see its own norms as self-evidently superior to others.

Sumner considered patriotism the modern, civilized version of ethnocentrism: “for the modern man patriotism has become one of the first of duties and one of the noblest of sentiments. It is what he owes to the state for what the state does for him, and the state is…a cluster of civic institutions from which he draws security and conditions of welfare.” Sumner was alert to the fact that this attitude of in-group loyalty and pride could overreach into harmful extremes, which he labeled “chauvinism.” But, he continued, all groups require in-group loyalty in their members. Any that did not effectively produce such loyalty would collapse in the struggle against other groups.

There were two intimately related questions at the heart of early American social science, and Sumner contributed complex, compelling answers to both. The first question was what should be seen as the most important cause of human behavior: biology or culture. The second was whether or not it was possible to rank cultures hierarchically. Sumner insisted that all humans share basic needs that result from our nature as living organisms facing challenges posed by the natural environment. We must discover means of extracting from the world the resources required for our survival and flourishing. Basic patterns of survivalist action, which Sumner called “folkways,” emerge to meet those needs. These folkways, and the more elaborated moral categories built on top of them (which he called “mores”), are relative to a given society. But they are also all rooted in fundamental facts about human nature. They can therefore be ranked according to the results they yield in their capacity to provide for human needs. All might be recognized as clever and inventive ways to respond to the challenges of life, but some respond more effectively than others.

Sumner would have laughed at the multiculturalist who claims that the horticultural tribes of the indigenous Americans or Africans were as well-endowed to meet these basic needs as the culture of the modern West. And similarly, within the America of his day, he did not doubt that the groups which valued and practiced asceticism, thrift, and productive labor achieved as a consequence greater freedom, more power, and higher rank than those groups which did not. There is no reasonable way this could be called unjust. Sumner was a careful student of non-Western cultures, and he recognized the excellence of many of them. But the idea of a society with no dominant culture, only a plurality of equally valuable and viable sub-cultures, would have appeared nonsensical to him.

In today’s world, it need hardly be said, ethnocentrism has become an epithet that one uses only to slander others as insufficiently “woke.” The path from Sumner’s usage to the contemporary one runs directly through the work of another early 20th-century social scientist, Franz Boas. In contrast to Sumner, with his conservative laboring-class origins, Boas was born in Westphalia in 1858 to secular Jewish parents who typified the well-educated European liberal of the mid-19th century. They had shed the religion of their ancestors entirely (“broken through the shackles of dogma,” in his words) and Boas wrote of growing up in a “German home in which the ideals of the Revolution of 1848 were a living force.” From an early age, Boas was drawn to questions of what today would be labelled “social justice.” He bore scars from duels fought over perceived anti-Semitic slights. He was an early champion of the notion, not fully developed until the late 1960s, that African history was as great and glorious as the histories of Asia or the West. When he presented these views in 1905, at the invitation of W.E.B. Du Bois, to a black audience at Atlanta University, their radicalism stunned his American host, who would himself later convert to full-blown Stalinism.

The then-dominant mechanism in anthropological science for explaining distinctions in human populations was race, broadly assumed to be a biological aspect of human difference. Boas rejected it altogether and aimed to reorient anthropology on a culturalist basis. He was not, however, a multiculturalist in the contemporary sense of the term. He believed the social inequality of non-white peoples in the US, specifically blacks and Native Americans, would be solved ultimately not by the multiculturalist principle of emphasizing and celebrating cultural difference, but rather through intermarriage and the eventual disappearance of the country’s ethnic and racial pluralism. The mixed-race America that would result would leap over the problem of racial and ethnic conflict by erasing the relevant differences.

It took two of Boas’s students, Ruth Benedict and Margaret Mead, to push the positions of their teacher to greater extremes. Benedict’s Patterns of Culture, published in 1934, attacked the “nationalism and racial snobbery” that she claimed had emerged in response to the increased intercultural contact of modernity. The threat of xenophobic nativism would need to be rebuffed by “individuals who are culture-conscious,” i.e., cultural relativists. Benedict begins with a presentation of cultural relativism as a research method in anthropology. It is scientifically inappropriate, she argues, to go about the study of a culture other than one’s own from what Sumner would have called an ethnocentric perspective. The trick is to avoid “preferential weighting” of cultures, as the proper understanding of human culture is impossible unless one rejects the very notion of cultural superiority.

This perspective enables us to see that Western culture has succeeded not because of any substantive superiority, but only because of “fortuitous historical circumstances.” Sumner, it is to be understood, is right that ethnocentrism is the natural attitude of humans. But he is incorrect in assuming that such an attitude can have any positive consequences whatsoever. Ethnocentrism, the love of one’s own culture above others, is a kind of mental disease, a wholly negative trait that must be overcome not just by professional anthropologists but by all truly civilized human beings.

A year later, Mead’s book Sex and Temperament in Three Primitive Societies shook up middle-class American readers still further by pouring boiling acid on one of the most basic aspects of human nature: the sex difference. In a study of three New Guinean societies, Mead claimed to have found stupendous variation in sex roles that proved biology a non-factor in the face of the superior force of culture. In one of the societies she described, both men and women showed low levels of aggression; in another, both sexes were highly aggressive; in the third, women were more aggressive than men. Culture has the final say, and it can say whatever it wants, purported biological realities be damned. Human nature for Mead was “almost unbelievably malleable.”

By mid-century it had become de rigueur in anthropology to assert that there was no human nature and that culture was the unique cause of human behavior. Everything man is “he has learned, acquired, from his culture,” wrote the anthropologist Ashley Montagu in 1973.

The basic idea that emerged from this intellectual transformation of anthropology was that human cultures are both infinitely varied and equally viable. It is no small matter that neither of these claims is true. Human culture does vary significantly, but there is a wide range of features that all human cultures share. The anthropologist Donald Brown compiled a 10-page list of such universal features in 1991. They include, for example, the admiration of generosity, male domination of the political realm, and a preference for one’s own children and close kin.

It is not a simple matter to rank cultures, and careful attention must be paid to how environmental contexts affect whether a culture is productive or not in a given situation. Nonetheless, the evidence of the objective rank of cultures is, as Sumner argued, given in broad relief by the history of humanity’s time on the planet. Western civilization emerged as dominant in the world in part because it was Europeans and not the Chinese who came upon the incredible resource pool of the New World. But much of the explanation for why the former and not the latter got there first has to do with cultural facts. Protestant Europeans embraced a religious worldview in which productive labor had a positive, even spiritual valence it did not have elsewhere, and this enabled the rise of market societies.

A non-judgmental attitude may well be of use as a methodological tool for the ethnographer, but the problems with full-blown cultural relativism as moral and political philosophy are difficult to overlook. Some cultures, for example, practice female genital mutilation, which in some cases involves the complete removal of the clitoris, as a rite of passage. Though there are professional anthropologists who endeavor mightily to rationalize this practice, it is a hard sell to anyone not fully indoctrinated into that esoteric professional belief system. Most of us have little trouble recognizing that such practices mark these cultures as morally inferior to our own, which recognizes girls and women as meriting the same sacred respect that boys and men receive.

The ’60s

However problematic cultural relativism becomes when seen outside the pure realm of ethnographic methodology, it has had tremendous influence on our way of thinking about American cultural identity. The radicals of the 1960s took up the work of the anthropologists of the ’30s. They worked eagerly to turn academic doctrines into broad political platforms.

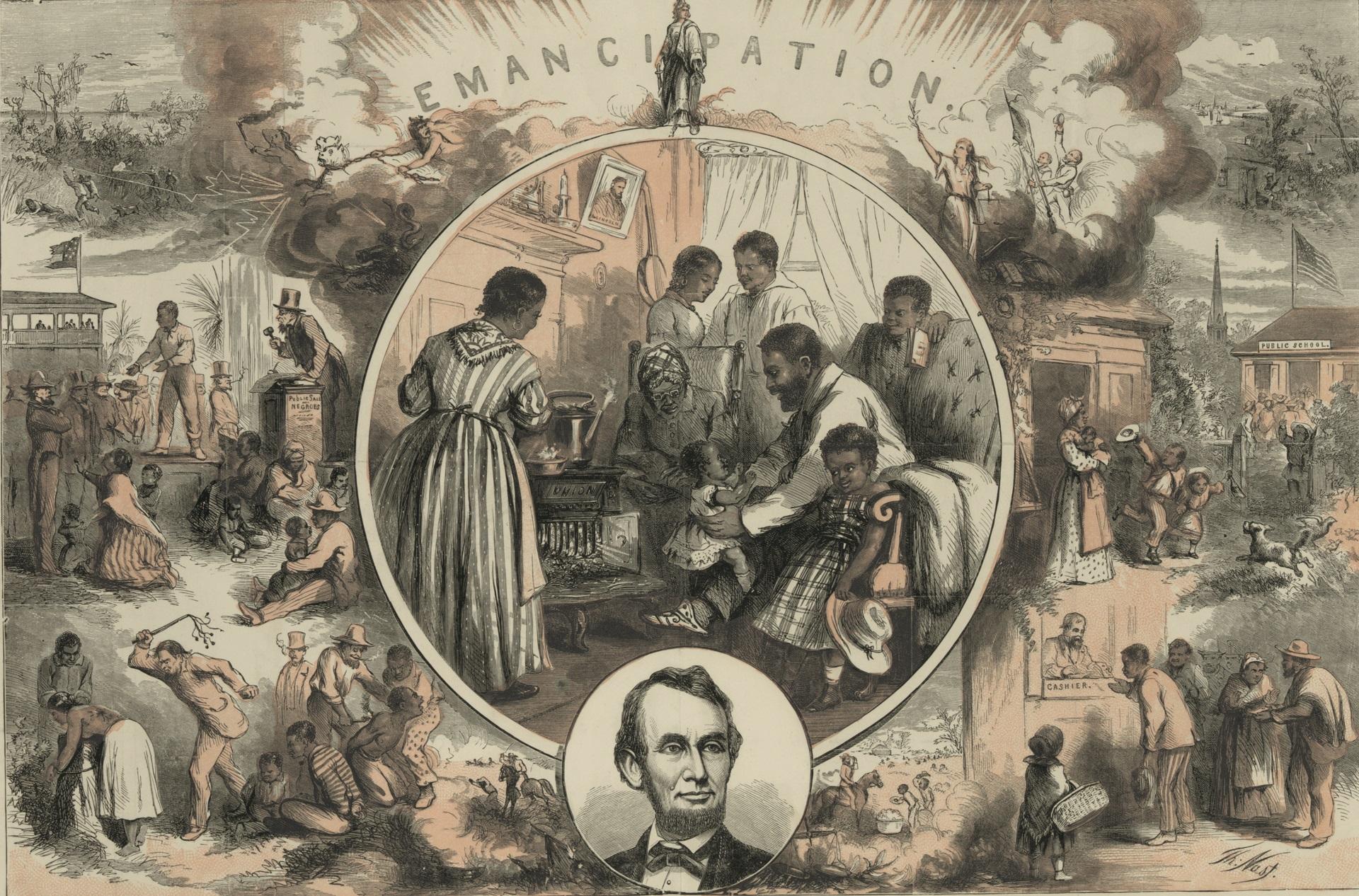

It was within the Civil Rights movement that this effort became most strenuous. Up through the mid-1960s, the dominant figures and groups of the movement still called for racial redress within a framework that assumed American patriotism was still possible. The movement’s very greatest political successes—the Brown v. Board Supreme Court decision, the Civil Rights Act of 1964, and the Voting Rights Act of 1965—were a consequence of its adherence to what Sumner would have called an ethnocentric set of cultural values. The values of individual right and equality under the law, which are at the core of the American project, were to be embraced enthusiastically and fully as our culture, superior not only to other competing cultures but also to our failed practice with respect to race relations prior to the mid-20th century.

But, as the assassination of Martin Luther King Jr. was cheered by the Black Panther Party in the person of Eldridge Cleaver, so too the dominant voices in the later Civil Rights movement denied that a unitary American culture should be celebrated, or even that it had ever historically existed. The neat suits and ties of student protestors in the early ’60s were replaced by dashikis and Kente cloth, and the adherence to deep American cultural values was thrown overboard for Black Power and pan-Africanism. At San Francisco State University in 1968, a coalition of radicals in the Black Student Union and a group calling itself the Third World Liberation Front led a five-month campus strike, during which there were pitched battles between protestors and San Francisco police. The goal was, in the words of a San Francisco Chronicle writer, to forcibly impose “changes to the Eurocentric prism through which their education was being processed.”

The end result was the nation’s first Ethnic Studies College, from which divisive far-Left ideologies based on contempt for historical America would be imparted to students. Allan Bloom, in a late chapter in The Closing of the American Mind, gives a bracing account of a similar process at Cornell in 1969. In this case, radicals armed with rifles seized control of parts of campus.

Too many have forgotten how militant the Civil Rights movement then became, and too many fail to comprehend how effectively its ideas have been mainstreamed. One of the points in the Black Panther Party’s Ten Point Program had to do with the immediate release of all black inmates from jails and prisons on the grounds that they were all by definition political prisoners in a racist society. Many civil rights radicals were by the early 1970s positing that black prisoners were the population most oppressed by white racism and therefore most in need of political aid. At the vanguard of the movement were criminal thugs masquerading as activists.

One such radical was George Jackson, whose book Soledad Brother made him a celebrity until his death during a prison escape attempt, in which he murdered three guards and severely wounded three more. Another such activist, Joanne Chesimard (now known as Assata Shakur), participated in the murder of a peace officer. Convicted of the crime and subsequently smuggled out of prison to Cuba, Shakur is now revered by Black Lives Matter as a founding inspiration. The prison abolitionist movement, extremist in the early ’70s, is today thriving within the academy in myriad courses on “race and imprisonment.” It is expounded in New York Times best-selling books such as Michelle Alexander’s The New Jim Crow and Bryan Stevenson’s Just Mercy.

But what most aided the advance of radical multiculturalism was a single piece of legislation. The 1965 Immigration and Nationality Act abolished previously existing quotas designed to stabilize the ethnic makeup of the US and limit the number of unskilled laborers entering the country. The immigrant pool thus changed from its original composition, largely drawn from populations that were culturally close to the existing American society, to a new assemblage of groups coming from much more culturally diverse locations in Africa, Asia, and Latin America. As this shift occurred, the anthropological doctrine of cultural relativism was readily seized on as explanatory tool and political justification. The creation of a Diversity Lottery visa and new, much more liberal rules on admission of refugees and asylees ensured even more grist for the multiculturalist mill.

Predictions by the ’65 bill’s sponsors that the uptick in immigration spurred by the new policy would be brief and quantitatively unimpressive proved egregiously incorrect. The new wave of immigration has had a transformative demographic effect. Whether one approves or disapproves, it is undeniable that we are now in a truly new situation. We have had high immigration levels, and from historically new points of origin, in the past, for example around the turn of the 20th century. But such periods have always previously been followed, after a few decades, by a moratorium on new immigration and intense efforts to assimilate the recent immigrant populations. We are now more than a half-century into a massive new wave, culturally and ethnically more diverse than any other in our history, and there is still, even in the wake of the 2016 election, precious little sign of any real and widespread political will to slow it or to assimilate our new arrivals. Thanks to multiculturalism, that will has been utterly lost.

Multiculturalist Ethnocentrism

The early incarnations of multiculturalism in educational institutions made use of the empirical fact that, in large urban areas at least, 1960s America was already characterized by a significant amount of demographic diversity. Given this, it was argued, what harm is there in revising curriculum to reflect that and provide students an accurate view of the lay of the land? In the Detroit school system, for example, “Dick and Jane” readers set in the suburbs were replaced with materials showing individuals of varying races living in downtown city centers.

But mere description of existing diversity was soon no longer enough. Multiculturalists began to argue for curricula that would advocate diversity as a good in itself and portray the existing dominant American culture as benighted and racist.

One notes with some poignancy that Sumner has at the end of the day been vindicated. The program of cultural relativism as an equalization of cultures, as a position in which no hierarchy among cultures could be established, failed utterly. In academic anthropology, the norm these days is ethnographies of non-Western cultures as straightforward advocacy pieces for those cultures, filled with observations about how superior such cultures are to our own. In the political sphere, it is even more evident that Sumner was correct in identifying ethnocentrism as our natural attitude. The multiculturalist program of the ’60s and ’70s did not create a plurality of different and mutually respectful cultures. In place of a unified American culture based on European moral and political philosophy and Anglo-Protestant religious values, it produced a new hierarchy.

Sumner was right. “Us versus Them” is apparently a preset feature in humans, very difficult to turn off completely and easily invigorated by myriad commonly encountered social situations. The advantage of the old nationalist way of doing this in America is that it allowed for integration of differing groups under the common patriotic aegis. The national identity bound many substantively different cultural groups into a coherent “Us,” whereas only those outside of this American grouping counted as “Them.” We now have built an extremely aggressive, destructive form of “Us versus Them” into the very warp and woof of our most fundamental institutions, and we train our young in its caustic doctrines. Where such a game ultimately ends is a question that we rightly contemplate with great trepidation.

The American Mind presents a range of perspectives. Views are writers’ own and do not necessarily represent those of The Claremont Institute.

The American Mind is a publication of the Claremont Institute, a non-profit 501(c)(3) organization, dedicated to restoring the principles of the American Founding to their rightful, preeminent authority in our national life.
