
Welcome to Online Censorship 2.0

Reach suppression is happening right now.

All translations from German were done by the author.

A recent ruling by a German court at first glance could be seen as a victory for freedom of speech. But on closer inspection, it shows why so-called visibility filtering—artificially restricting the reach of online content rather than removing it outright—is the future, and indeed increasingly the present, of online censorship.

As reported by the German alternative media outlet Nius in February, a court in Wiesbaden acquitted defendant Sebastian W. of the charge of having “insulted” Germany’s then-Economics Minister Robert Habeck. (“Insult” is a crime in German law.) In a July 2024 tweet, Sebastian W. referred to the German minister as a “traitor.” Under Section 188 of the German Criminal Code, which is commonly known in Germany as the lèse-majesté law, the penalties for insulting a public official are greater than those for insulting an ordinary citizen.

In another highly publicized case also involving Habeck, German retiree Stefan Niehoff had his home raided in November 2024 merely for having retweeted an obviously satirical tweet that jokingly referred to Habeck as a “professional moron.”

Much of the ostensible “hate speech” prosecuted under German law consists not, say, of speech considered to be racist, sexist, or homophobic, but merely of personal insults. Hence, much of the speech that German sources reported to online platforms under the Digital Services Act (DSA), the E.U.’s flagship online censorship law, undoubtedly consists of such insults as well. The activities of the German organization HateAid, which enjoys the status of an officially certified “trusted flagger” of illegal content under the DSA, make this clear.

Under the DSA, European sources may flag any online content in any language posted anywhere in the world. A German organization like HateAid may not only flag German content for removal—it can also target Americans.

Thin-Skinned

The tweet for which Sebastian W. was charged was ironically part of a larger discussion of insult complaints, which included reference to HateAid.

The main tweet, which was titled “Urgent Warning and a Couple of Tips on Insult Complaints,” began as follows:

The business model of some politicians and suchlike is to bring charges against you, even without incurring any risk thanks to foundations [sic] like HateAid. Thus, politicians use our tax money to bring charges against us without incurring any risk, great.

To this, Sebastian W. replied, “Let’s see what networking can accomplish.” He then referred to a lawyer who is known to have represented Habeck and who, Sebastian W. said, is present on X with three different accounts to “case-hunt.” “Absolutely block,” he continued, “anyone who knows other hack lawyers of other traitors can share, for the purpose of blocking, under the comment.”

Sebastian W. appears not to have been charged for the description of the lawyer, but only for alluding to Habeck as a “traitor.” Why was he acquitted? Section 188 protects public officials against “insults” only on the condition that the “act” (that is, the insult) is such as to “substantially impede” the performance of the official’s public duties.

As Sebastian W.’s lawyer, Melina Schwendenmann, explained to Nius, the court found that while his tweet did indeed constitute an insult of a public official, it did not satisfy the stated condition given its limited reach. The reply had been viewed only 1,245 times. The court found that this was not sufficient reach to impede the performance of Habeck’s public duties, and hence to justify a conviction.

Et Tu?

The ruling is highly significant for the application of the DSA, which requires online platforms to remove illegal speech, at least in the jurisdiction where it is illegal. (Given the complexities and costs involved in tailoring content moderation to different markets, this requirement can often result in global takedowns.) But the law also explicitly permits platforms to meet their DSA obligations not by removing content outright, but by restricting its visibility and hence reach.

This idea was popularized by Elon Musk in November 2022 when, shortly after completing his acquisition of Twitter, he announced a new policy of “freedom of speech, but not freedom of reach.” But long before Musk adopted it, European Commission officials were already using the freedom-of-speech-is-not-freedom-of-reach mantra to try to reconcile the obvious censorship the DSA requires with the freedom of expression that is, after all, guaranteed by the E.U.’s own Charter of Fundamental Rights. (See Věra Jourová’s December 2021 remarks to Politico, where the E.C.’s then-vice-president for values and transparency uses the same expression.)

In theory, visibility filtering or reach restriction is supposed to be reserved for speech that is not per se illegal, but is still deemed socially harmful. Hence, it would be illegal under the DSA itself for platforms to allow it to proliferate. Such “legal but harmful” speech essentially refers to alleged mis- or disinformation. The suppression of “illegal hate speech” and the suppression of “harmful disinformation” represent the two main pillars of the DSA’s censorship system.

But the ruling of the Wiesbaden court shows that reach restriction, given the above-mentioned condition, can in fact make otherwise illegal speech legal by eliminating the harm, or ostensible harm, entailed by its wide dissemination. As we have seen, this applies to Section 188 of the German Criminal Code regarding insulting public officials.

It also applies, however, to Section 130, Germany’s “incitement” law that prohibits “hate speech” in the more usual sense. This is because Section 130 also involves what we could call a “publicity condition.” Incitement is illegal only on the condition that it occurs in such a way as to represent a threat to public order. Incitement that falls on deaf ears, so to speak, represents no such threat. Ensuring that such speech is not heard, or is at most only barely audible, is precisely what artificial reach restriction is designed to bring about.

Censorship, 2026 Style

The American public debate about online censorship remains almost entirely mired in the assumption that such censorship essentially involves content removal and account suspensions. But this is a very 2020 assumption that no longer corresponds to the reality of online censorship.

Major platforms’ regulatory submissions to the E.U. show that visibility filtering is already the predominant mode of censorship they use to remain in compliance with the DSA. For instance, data included in X’s April 2024 DSA Transparency Report shows that the platform took action on 226,350 items of content reported to it under the DSA during roughly the prior five months, with 40,331 being globally deleted and 62,802 geo-blocked in the E.U. This means, however, that the remaining 123,217 items, or about 54%, “merely” had their reach restricted in keeping with the FOSNR (Freedom of Speech, Not Reach) enforcement philosophy that is also touted in the report.

Unfortunately, these aggregate figures were not included in X’s subsequent DSA transparency reports, making it more difficult, if not impossible, to infer the exact extent of visibility filtering in the platform’s DSA enforcement mix.

But other platforms are not so mysterious about the extent of their visibility filtering. Meta’s August 2025 DSA Transparency Report for Facebook shows that its response to alleged misinformation consisted almost exclusively of visibility filtering, or what Meta—and, incidentally, also the DSA—describes as “demotion.” This report explains that demotion “refers to an action that we may take to reduce the distribution of content.” Nearly nine million items of content containing alleged misinformation had their distribution restricted during the six-month reporting period. For what it is worth, Meta claims to have had all such restricted content fact-checked.

Following a change in reporting requirements, the most recent set of DSA transparency reports now essentially consists of raw spreadsheet data presented according to a standard template. The data for X suggests that the proportion of visibility filtering in the platform’s DSA enforcement mix has, if anything, increased since mid-2024. But deciphering that data would require an article in its own right.

However worthy their intentions, American defenders of free speech who continue to focus on content removal and account suspensions are missing the plot about the online censorship regime that has coalesced under the pressure of the E.U.’s Digital Services Act. Content removal and account suspensions were censorship 1.0, while 2.0 is visibility filtering.

In an August 2023 interview with CNBC, then-X CEO Linda Yaccarino spoke of content that she famously termed “lawful but awful”—a dumbed-down version of the E.U.’s “legal but harmful”—and cheerfully admitted that the platform would make such content “extraordinarily difficult to see.” But what, after all, is the difference between “extraordinarily difficult to see” and invisible? The line is a thin one indeed.

The American Mind presents a range of perspectives. Views are writers’ own and do not necessarily represent those of The Claremont Institute.

The American Mind is a publication of the Claremont Institute, a non-profit 501(c)(3) organization, dedicated to restoring the principles of the American Founding to their rightful, preeminent authority in our national life.

Racism is defined at the discretion of the regime.

The Left’s support for civil liberties has always been contingent on its own relative strength or weakness.

Competing visions of the purpose of free speech.

Radical Left currents run deep here at home.

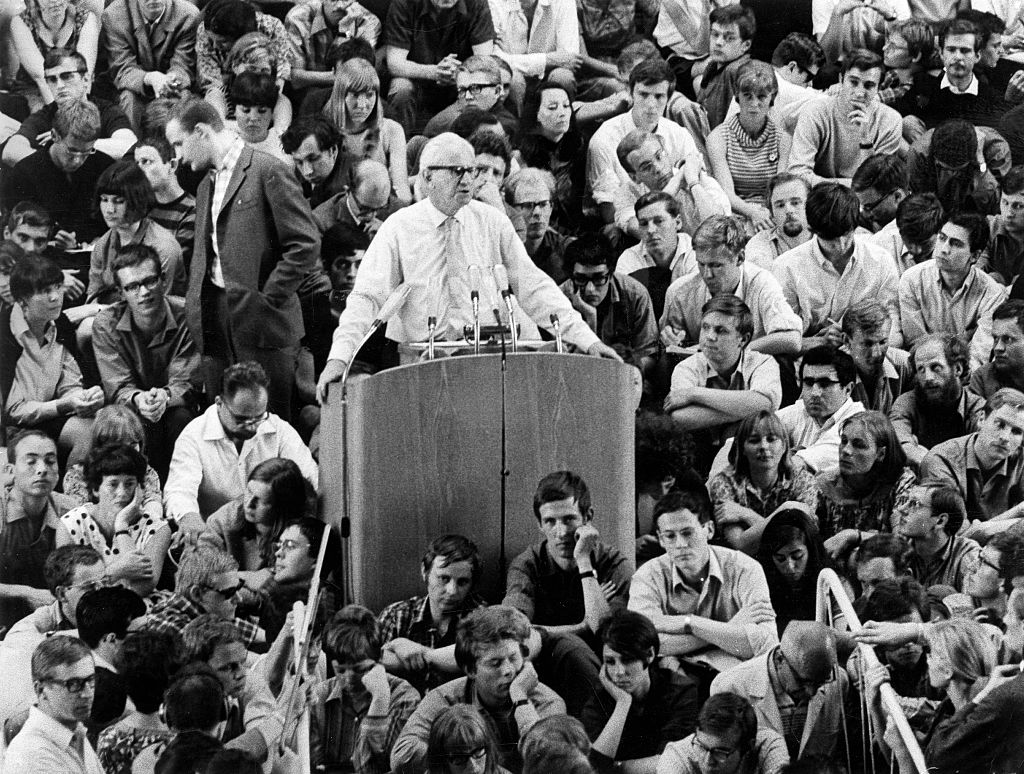

The speech heard ‘round the world.