
Throwing Down the Scientific Gauntlet on Natural Rights

A Response to Glenn Ellmers and J. Eric Wise

“Tell me, o Socrates: can human excellence be taught? And if it cannot be taught, can it be learned? And if it cannot be taught or learned, can it be acquired in some other way?”

Aristotle’s attempts to answer this and similar questions undergirded much of Western thought for many centuries. Where Plato called us to step out of the cave of shadows into the realm of unchanging and eternal mind (nous), Aristotle told us to look at ourselves and the world. To use our reason and deduce principles. To separate out causes. To see the ordered structure of the world and of ourselves.

It was a powerful message. But today it founders precisely on the results of looking closely at our world and ourselves. Those who would argue for a polity and a society grounded in natural rights are failing for the same reason: they have not absorbed what science and mathematics have uncovered over the last century or so.

I’ll limit myself here to responding to the recent essays by Glenn Ellmers and J. Eric Wise, as they directly argue from an Aristotelian framework for natural rights.

The lessons I would cite can be summed up with three themes: the failure of deterministic categories and logic, the increasing prevalence of complex adaptive systems in our lives (especially those that are networked), and the meta-nature of humans. None of these contradicts an assertion of natural rights—but each one demonstrates the need to modify older intuitions and arguments regarding the human nature on which they are grounded.

The Failure of Deterministic Categories and Formal Logic

In 1955, four highly regarded researchers proposed an in-depth summer project at Dartmouth. John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon expected to make considerable progress toward artificial intelligence in a few months. Their approach was to “proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

That confidence rested on decades of work in formalizing mathematics and logic. Axiomatic systems with clearly defined terms that yield logical proofs of theorems were, it was thought, the very essence of mathematics. Key to the effort was Aristotle’s Law of the Excluded Middle: a thing is either A or NOT A, with no third possibility (and, by its companion, the Law of Non-Contradiction, it cannot be both).

All mathematics, Russell and Whitehead had proclaimed in their Principia Mathematica, could be reduced to set theory, and set theory to formal logic. Through such pure formalism, the rationality of mathematics could be reclaimed in the face of non-Euclidean geometries and transfinite numbers.

They failed. Kurt Gödel showed that any consistent axiomatic system rich enough to express ordinary arithmetic contains true statements it cannot prove: there is always content that the formalism itself cannot capture. Later, Alfred Tarski would propose the use of meta-logics, axiomatic systems that describe the ground rules to be followed by a given formalization. He acknowledged that this created a potentially infinite regress (meta, meta-meta, and so on) that ultimately ended in human intuition and sensory abilities.

My Aristotelian friends will recognize the problem of infinite regress. But Tarski’s approach, seminal to the development of computer science and all modern technology, does not end in an Unmoved Mover.

Meanwhile, the 20th century saw the rise of Einstein’s relativity, then the still more unsettling insights of quantum mechanics, and today those of quantum information theory. These insights undercut the assumption that what makes sense to human rationality holds at every level of reality. Instead, so far as we can tell, at the heart of the world we occupy (and are part of) lies a probabilistic uncertainty that can itself be grasped only through the equivalent of meta-natural laws.

Thus Wise’s claim, that mathematics is fundamentally about units and rests on our intuitions and reasoning about the objects around us, fades in the face of the demonstrated inadequacy of both our intuitions and reductionist attempts at empty formalism to produce a fully satisfactory mathematics.

But with the development of binary logic gates, and hence of electronic computers, the lure of reducing phenomena to ones and zeros, to true and false assertions, proved irresistible. Indeed, such reduction is often quite useful. If I am tracking product sales, keeping a mailing list of potential voters, or calculating changes in species density in a given ecosystem, computers do quite nicely. They coincide with our intuition and reasoning abilities, so long as we don’t ask hard questions.

Questions like: Which economic measures are we collecting, and would other measures tell a different story about the effects of policies? Or: What is gender, and how does it relate to biology?

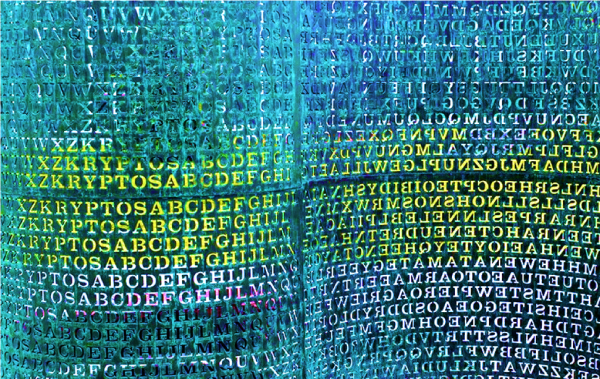

Moreover, binary logic gates, ones and zeros, are no longer the only paradigm for computing. Quantum methods are showing significant potential for applications ranging from encryption and decryption of information to rapid, deep machine learning on massive amounts of sensor and other data. It’s no coincidence, then, that most A.I. and machine learning work of the last few decades has consisted not of building logical implication systems but of emulating how we see, move, grasp objects, and quickly respond to events. That’s the easy part.

How, then, shall we respond to Tarski? If every human attempt to fully articulate the content and meaning even of highly abstract mathematical concepts fails, what should we say to Glenn Ellmers and his use of various categories to argue for the obvious teleological purpose of humans and reason?

Aristotelians have not absorbed the very strong evidence for such human characteristics as graded salience and embodied cognition, key elements in how we think and use language. Work on graded salience by Rachel Giora, Istvan Kecskes, and others shows that we develop concepts and their representation in language over time, that these shift with personal experience and social context, and that they shift even within a given individual as a result of such things as learning to speak a new language. Our use of language always incorporates private content.

Embodied cognition, in turn, draws on evidence that for all but highly abstract concepts we process ideas and language through partial simulation of specific sensory stimuli and associated emotional responses, rather than through disembodied, idealized reason. Which sensory and emotional content is most salient for you or me will differ between us, and will differ within each of us over time.

Language and thought, deeply intertwined, are therefore meta, precisely in Tarski’s sense, rather than fixed, while also being shaped heavily by contingencies and by genetic and other differences between us. So the challenge for Ellmers and others is to identify what it would mean for a meta-nature to have a telos that is in some sense unchanging.

Complex Adaptive Networked Systems

But what about those highly abstract concepts, like justice? Again, fMRI and other evidence strongly suggests that they are both represented and processed as emergent properties of the human neural system, which is, in technical terms, a complex adaptive networked system.

Such systems are made up of very many elements and their interactions. ‘Adaptive’ means that the elements change their behavior depending on what is happening around them. ‘Networked’ means that ‘around them’ includes relationships with elements that might be physically distant but that influence actions nonetheless.

A key characteristic of complex adaptive networked systems is that they exhibit system-level behaviors that cannot be predicted by extrapolating from the behaviors of their elements. Thermodynamic equations accurately predict what happens when I heat a sealed container of air or water by a given amount, despite the very large number of molecules inside. But no such equations accurately capture important system-level behaviors in networks like the human brain/body system, economies under conditions of major technological disruption, or the collapse of communities in ‘flyover country’ in the face of economic, social, cultural, and environmental corrosion.

The whole is sometimes much greater than the sum of its parts. How, specifically, should we argue for the telos of a complex adaptive networked system like our embodied selves? In what way is such a telos sufficiently concrete to form the basis for natural rights?

Each of us is a highly complex adaptive system. This is true physically, emotionally, and especially cognitively. And we participate in a plethora of larger such systems, many with global reach mediated by technology.

Nature, But of a Meta Sort

Humans have, therefore, meta-natures. These meta-natures are shaped, but not fully determined, by our genetics, by the nutrition we received in the womb, and by the concepts we began to form as we were taught to use language to experience and think about our surroundings.

If we grew up in a Western culture, we learned to apply meta-concepts like agent, action, and the object of an action. If we grew up in an Asian culture, we learned to see the spatial relations between the elements in a picture as the primary truth it represents, a perspective that later extends to judgments about the primacy of society vs. the individual.

Contra Wise, this does not happen by a baby forming his own conclusions. The very language used in different cultures seeds significant differences in the most basic of concepts. And those concepts in turn shape subsequent experience and its interpretation. There’s a deep cyclical and networked set of interactions that lead to a child in a given culture developing a given framework about the world.

What is the argument for natural rights when so fundamental a notion as human agency acting on entities separate from oneself receives deeply different emphasis in different cultures? Must one say that only a certain culture is appropriate for articulating or defending such rights?

This is both the great challenge facing the attempt to reassert natural rights as the basis for just governance, and the possible path to success in that reassertion.

A Path Forward

The argument for natural rights must come to grips with the serious limitations of Aristotelian categories and logic if it is to persuade those familiar with (or simply experiencing the results of) the last few centuries of scientific insight. While they were an advance in the age of classical Greek thought, Aristotle’s conclusions have deep flaws. If we take Aristotle seriously, we should pay attention to what we’ve learned through observation and replace those conclusions.

An argument for natural rights can, however, be powerfully made if it invokes precisely those scientific insights. Graded salience and embodied cognition underscore the enormous impact of nutrition, parenting, and the narratives that pervade schooling; more broadly, they make the case for an intertwining of culture and individual that does not erase the distinction between them but definitely blurs the boundary. Network science makes it clear that issues of economics, trade, and what pronouns we use do not exist as standalone, abstract principles but are instantiated in the context of layers of community connections. To take one example, that is the Achilles’ heel of free trade in an era of rapid globalization.

Emphasizing the meta status of human nature addresses the conundrum of human equality vs. the evidence for significant differences among us. It provides a context for making principled arguments about polity and policy, challenging the simplistic relativism of post-critical thought and politics. But it also presents those who would argue for natural rights with a significant challenge.

It will be hard work. Is the Aristotelian natural rights community up to the task?

The American Mind presents a range of perspectives. Views are writers’ own and do not necessarily represent those of The Claremont Institute.

The American Mind is a publication of the Claremont Institute, a non-profit 501(c)(3) organization, dedicated to restoring the principles of the American Founding to their rightful, preeminent authority in our national life.
