What Makes Experts Special?

We’re the ones to decide on whom we should rely.

The coronavirus pandemic has thrust the issue of scientific expertise to the fore of our public debates like never before. Coming on the heels of widespread popular discontent, our current crisis makes plain how central scientific expertise is to public policy, leading some to herald the end of populism. Yet, the government’s response has also inflamed popular anger with the expert class. And experts, for their part, have hardly remained above the fray. Over one thousand public health and medical professionals recently signed an open letter expressing support for “protests against systemic racism,” drawing the ire of many conservatives, who object that only a few weeks ago these same experts opposed protests against lockdowns for public health reasons.

Does coronavirus vindicate the importance of scientific expertise and its institutions? Or does it offer yet more evidence of the dangers of scientific elitism? We have, once again, divided into warring factions, with one’s attitudes about “the experts” playing proxy for partisan affiliations.

Underlying this blistering culture war is a surprisingly philosophical problem: Whether, when, and how we should rely on scientific experts. It is a concrete instantiation of the broader tension between science and democracy—or the more ancient one between knowledge and politics. But before considering such abstruse questions, it may be worth pausing to consider some more homely ones. In fact, the issue of relying on scientific experts is a special instance of a much more familiar one: relying on others.

Relying on Others

Of course, we rely on others all the time; much of daily life depends on it. When your spouse tells you that he’ll pick up the kids from school, or your colleague says she’ll complete a project on time, you generally don’t subject their promises to withering skepticism (although you might follow up). Similarly, when your neighbor tells you that trash day was moved from Wednesday to Thursday, or your friend informs you that the get-together has been postponed, you typically don’t assume they’re lying or even that they’re wrong. Unless there’s a good reason not to, we often assume that others are dependable enough. That’s not the same thing as assuming that others are infallible—your neighbor could be wrong, your friend misled—or being indiscriminate. If we have good reason to think that someone might be wrong or unreliable—I’m more apt to trust the testimony of my wife than of my three-year-old, and to discount the testimony of a known liar or someone who clearly has no idea what he’s talking about—we adjust our expectations accordingly.

It’s not just family, friends, and neighbors. When I drive on the highway, I have to assume that most of the other motorists are relatively competent and careful and more or less law-abiding. That doesn’t mean that I shouldn’t be vigilant and prepared in case someone makes a mistake or does something reckless. When I travel, I rely on the train conductor or bus driver or pilot not only to possess the relevant skills (including how to operate the relevant technologies) but also to use good judgment. This, of course, doesn’t mean accidents never happen, whether through human or technical error or negligence. As these last examples suggest, things get a bit more complicated when we have to rely on strangers, especially those who possess knowledge or skills we don’t have. But the general lessons still apply.

When I go to get my car tuned up or my teeth cleaned, my relative lack of know-how renders me dependent on the car mechanic or the oral hygienist—but not necessarily passive. Most people don’t (or don’t know how to) tune up their own cars, much less their own teeth. Still, I might know enough about cars—or people—to know when I’m getting the runaround. I have opinions about what I can afford, what I need, and what seems reasonable. I may also simply have different goals in mind or access to other information (e.g., about when I plan to buy my next car) that may influence my decision. Finally, I have a choice about which car mechanic or oral hygienist to go to in the first place. Having some familiarity with the relevant expertise may help in making my selection; but I’m likely to rely most heavily on evaluative criteria available to everyone: Does this person possess the right skills and experience; is she well regarded by her professional peers and customers; does she seem trustworthy? I may also rely on a professional or personal recommendation. It goes without saying that none of these methods can guarantee a good outcome or prevent human error.

In short, relying on someone else—whether he or she has a specialized skill set or not—means depending on that person’s knowledge, experience, and judgment in some measure. But it hardly eliminates the need for me to use my own. Is the situation any different when it comes to relying on scientific experts?

Relying on Scientists

Consider an example. Does the Earth revolve around the Sun or vice versa? We all know the answer—or do we? How many of us can follow, much less perform, the empirical and mathematical demonstrations required to prove heliocentrism? Most of us simply take it on faith—not blind faith, of course: We have good reason to believe that the demonstrations exist. What we’re doing, in effect, is trusting the authority of the physicists or astronomers (or, more likely, the teachers) who have told us it is so. This doesn’t mean that the physicists and astronomers are always right or that we should believe everything they say in every context. But we do generally trust that they have a pretty good idea what they’re talking about when it comes to their own fields, that they’re well acquainted with the techniques needed to demonstrate the conclusions in question, and that they’re not out to deceive us. This is true for most of us when it comes to most scientific knowledge. That includes even most scientists, who do not and would not have the time, even if they had the ability, to verify every conclusion of every adjacent field or subfield of every branch of science.

In fact, this pattern holds within scientific fields. As the historian of science Thomas Kuhn observed, science progresses through paradigms, which, once established, orient and guide research. No progress would ever take place if every scientist set out to prove (much less disprove) every prior scientific conclusion. That’s because, as Aristotle pointed out long before Kuhn, every science rests on principles that must be accepted before any demonstrations may be carried out, on pain of infinite regress. This doesn’t mean that those principles are taken entirely on faith or treated as dogma. Scientists accept conclusions that have been battle-tested, even while recognizing they may yet be revised or even thrown out altogether. In so doing, the scientist, though more knowledgeable than the layman, does not behave altogether differently: he relies on the knowledge, experience, and judgment of his colleagues and predecessors, without forfeiting his own.

These examples might seem inapt. Many scientific findings—including the fact that the Earth orbits the Sun—don’t really impact my life one way or the other. (In fact, in daily life we often pretend the contrary, as when we say the sun “rises” and “sets”—even navigation and parts of astronomy rely on the sun’s apparent motion.) But what about when a surgeon uses an excimer laser on my eye to correct myopia or a dentist uses an X-ray machine to check for tooth decay? In both cases, I’m depending on the practical skill of the surgeon or dentist (or technician), but also upon our scientific understanding of electromagnetic radiation. The same goes for all kinds of technical expertise from pulmonary heart surgery and clinical psychiatry to meteorology and epidemiology.

In these examples no less than our more homely ones, we rely on others who possess knowledge and skills that we do not. (Note that the “we” includes everybody: The astrophysicist relies on the pulmonary heart surgeon or dentist no less than the service worker or car mechanic.) But that is not the same thing as blind dependence. Scientific experts can be wrong or biased, make errors of judgment, or practice deception; some are even outright frauds. Many times in our public life scientists are asked to make (and are perhaps too often willing to offer) pronouncements on matters far exceeding their areas of competence—areas in which they may or may not be any more knowledgeable than your next-door neighbor. In short, when it comes to scientists or any other kind of expert, we should be discriminating about whom to trust and when. Credentials can be useful for this purpose, but sometimes misleading; personal or professional recommendations may be helpful, but hardly fail-safe. There’s no substitute for sound judgment, and no guarantee against human error.

Science as Techne

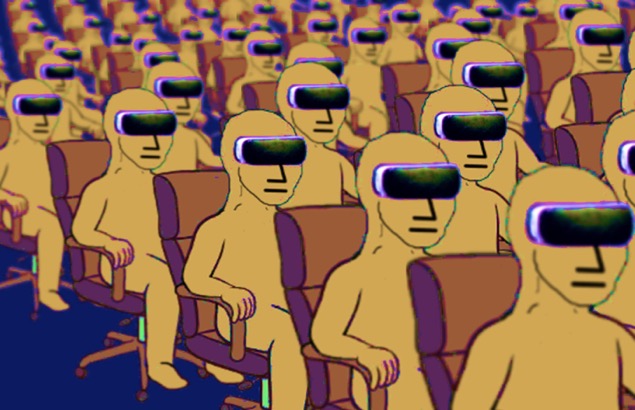

We tend not to think about scientific expertise this way, especially when it comes to our public life. Instead, we treat science as if it were a black box, inaccessible to the “laymen” who rely on it to mechanically produce results. And, depending on your estimation of the select few who do understand its inner workings—or, perhaps, your prior ideological commitments—you will either accept or reject what comes out of the box. Science, in other words, is identified with its outputs—a set of propositions extracted from scientific practice and dropped into the foreign territory of politics, where its survival will depend on the alleged hostility or openness of the political practitioners to evidence and reason.

In this way, as the French philosopher Henri Bergson once pointed out, we falsely analogize scientific inquiry to mechanized labor—as in the term “knowledge production.” Assembly lines produce things—readymade objects marketed to consumers who have little or no knowledge of the specialized skills required for production. By analogy, scientists are intellectual specialists who work collaboratively within a kind of cognitive assembly line to produce an object—expert knowledge—for consumers who do not or could not participate in its production. Scientific knowledge thus becomes a product to be consumed (or rejected) wholesale. And the lay public—whether the ordinary citizen or the political representative—is reduced to the role of passive consumer.

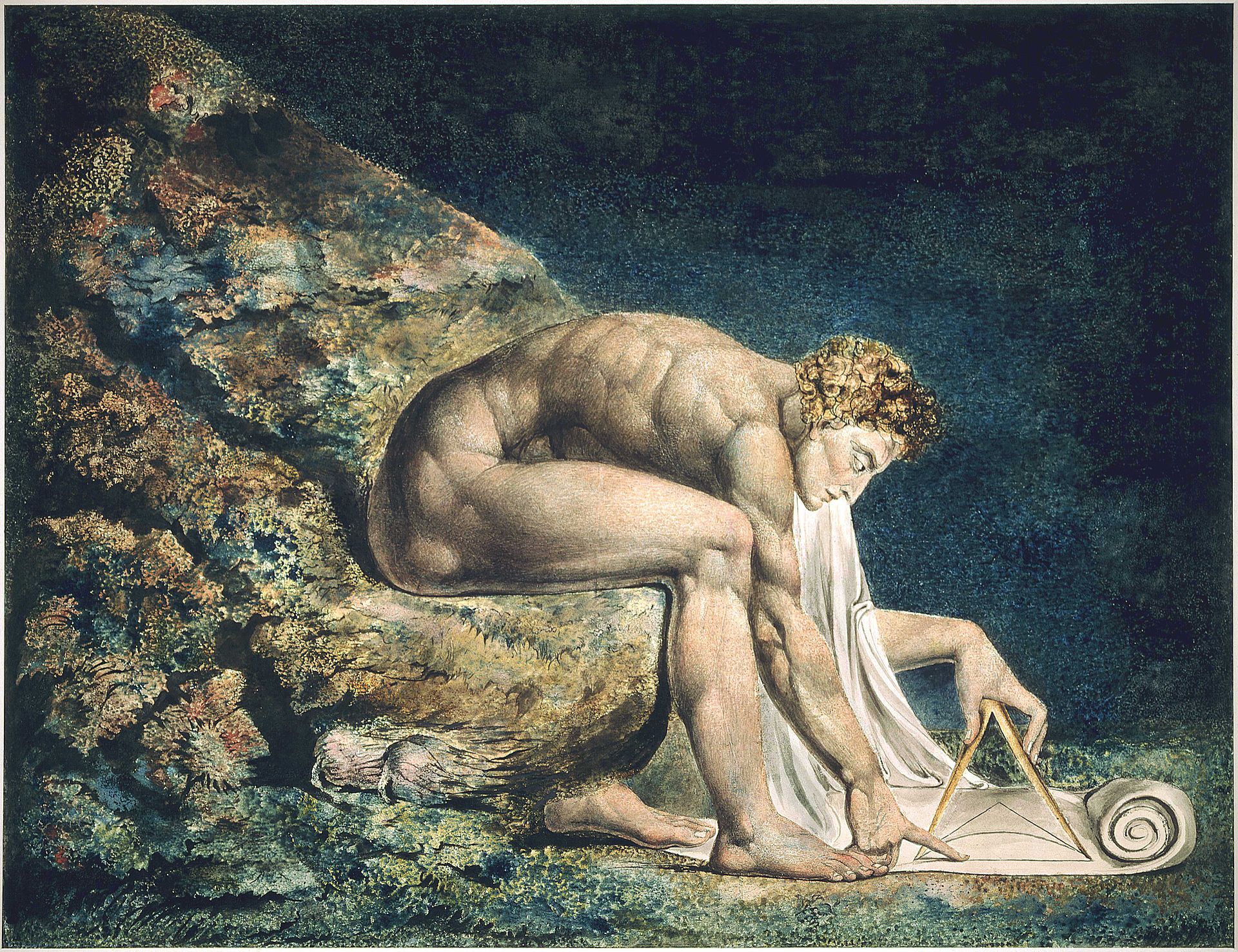

But, as Bergson notes, scientific inquiry is not a product so much as a capacity to seek and attain understanding within a given domain. This is why Aristotle called knowledge—or scientia, in Latin—an “intellectual virtue.” For him, a virtue is a habit, a settled disposition to behave in a particular way acquired through a lifetime of learning and practice. By extension, scientists, in our modern sense, are those of us who have cultivated the habits and skills—the intellectual virtues—needed to subject certain domains to systematic study, just as cobblers are those of us who have cultivated the habits and skills needed to make shoes, and so on, from car mechanics to musicians. In this sense, rather than mechanized labor, scientific inquiry might be better analogized to a craft—what in ancient Greek is called a techne.

What is significant about a craft is that it rests on what the philosopher Michael Polanyi called “tacit knowledge.” Tacit knowledge is a kind of savoir faire that cannot be easily encapsulated in words; it is practical rather than propositional—acquired through experience, sharpened through practice, and transmitted through apprenticeship. Philosophers, historians, and sociologists of science from Polanyi and Kuhn to, more recently, Harry Collins, have emphasized the “tacit dimension” of science. What the scientist gains first through education and apprenticeship and afterwards through experience is not only explicit knowledge—e.g., “the gravitational force between two objects varies inversely with the square of the distance”—but tacit knowledge: a facility with the techniques, skills, and instincts needed to practice his craft well, e.g., to have a nose for what kinds of questions are worthy of investigation, which statistical methods should be applied to a given problem, how to tweak an experimental apparatus to correct for certain kinds of error, etc.

As when learning a craft, the student learns science first through imitation. The work of an exemplary practitioner—a Galileo or a Newton—is treated, literally, as paradigmatic. This is what is going on when students are asked in physics 101 to rehearse Galileo’s famous inclined-plane experiment. They are given not just the hypothesis, but also the methods to employ, the experimental setup, and the intended outcome—all modeled on Galileo—and told to execute. (This is almost literally the opposite of the scientific method as it is often portrayed.) What the student is learning here is not propositional knowledge—“the rate of fall of an object is independent of its mass”—which can be gained simply through memorization, but rather how to become a practitioner. Pedagogically speaking, it is no different from teaching a student to paint by imitating great works of art. Expertise comes later when the student leaves school and begins to hone the knowledge and skills she has acquired through experience—when she begins to formulate her own research questions and methods of inquiry, to design her own experiments, and, ultimately, to make her own discoveries.

To put the same point differently, scientific expertise is not simply information. It is not first and foremost an output but a habit, one that is acquired and perfected through practice. This does not mean scientific experts never err. On the contrary, what follows from this account is the ineluctable role in scientific practice of prudential judgment—the capacity to apply general knowledge to particular circumstances. This is what the scientist does, for example, when deciding if an empirical anomaly is a counterinstance to a general theory—and a reason to throw out that theory—or simply something that the theory has yet to explain. This is also what the scientist does when appraising two rival theories that both cover the same facts, or when deciding whether to use a stochastic or deterministic model, say, in studying the outbreak of a new virus in a given population. To remain with a timely example: An epidemiologist must exercise judgment when deciding whether to use a model that assumes random mixing within a target population or not. This judgment may turn on a host of factors, including past experience, well-established theory, the specifics of the case at hand—including what is known or not known about the relevant demographics, what data are or are not available—and to what use the model will be put both within epidemiology and in public policy.

However, once we recognize the ineliminable role played by prudential judgment within the practice of science, we can also see why error and disagreement are ineliminable. Prudential judgment is called for precisely in those circumstances where there is uncertainty, when we cannot simply follow a rule to get the right answer. Up close, science is a messy human affair like any other. Scientists are fallible people who happen to possess knowledge, skills, and experiences that most others in our society do not, and which are vital to modern life—in this sense, they are no different from car mechanics or plumbers or farmers or architects or financial accountants. Too often, though, we treat them instead as a class apart, members of a privileged group possessing knowledge distinct in kind from that attainable by mere mortals. But when we cordon off science from the human realm of judgment, error, and disagreement, we wind up treating it as oracular—and are inevitably disappointed.

Inescapable Others

What does all of this mean for whether, when, and how we should rely on scientific experts?

On the one hand, it means that it is perfectly rational and necessary to rely on scientific experts. And doing so is not so much a matter of trusting scientists’ conclusions as of trusting their experience and judgment. The political scientist Philip Tetlock captures this idea well when he says that “what experts think matters far less than how they think.” But this, of course, does not imply that our reliance on scientists should be uncritical or indiscriminate any more than our reliance on car mechanics or oral hygienists.

On the other hand, we must not hold scientists to a higher (or lower) standard than we do anyone else or any other group of experts. We have to give scientific experts room, so to speak, to practice their craft, while recognizing that they are human. Conversely, scientists must be open and honest about the kinds of judgments they make, the assumptions built into their conclusions, and the limits of their findings or their areas of competence. They must be willing to admit error, even at the cost of professional or political embarrassment.

In short, neither scientists nor the rest of us can treat science like a black box. But once we’ve pried the black box open, it becomes a lot harder to shut out the judgments and opinions of laymen. We have to accept that, like the pursuit of knowledge itself, translating knowledge into action is a messy affair, especially in our fractious democratic culture—an unholy mix of evidence, reasoning, ignorance, value conflicts, disagreements, skepticism, and trust. But such is precisely what it means to rely on others.

The American Mind presents a range of perspectives. Views are writers’ own and do not necessarily represent those of The Claremont Institute.

The American Mind is a publication of the Claremont Institute, a non-profit 501(c)(3) organization, dedicated to restoring the principles of the American Founding to their rightful, preeminent authority in our national life.
